
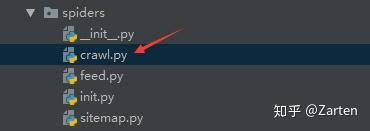

Scrapy is one of the most accessible tools for crawling, and also scraping, a website with ease. So let's see how we can crawl Wikipedia data for any topic. We are going to scrape a Wikipedia page that provides a list of lists of different types of animals. We will spider through it by first downloading this page and then downloading every link on it that points to a subsequent list. We will also avoid any pages that talk about bears. We don't want bears.

First, install Scrapy if you haven't already (for example with `pip install scrapy`). Then create a file called fullspider.py that starts with these imports: `from scrapy.spiders import CrawlSpider, Rule` and `from scrapy.linkextractors import LinkExtractor`. The first line loads Scrapy's `CrawlSpider` class and the `Rule` object. The second loads the `LinkExtractor` module, so we can ask Scrapy to follow links that match specific patterns for us. A link extractor is an object that extracts links from responses, and `CrawlSpider` spiders use link extractors through a set of `Rule` objects. `LinkExtractor` is Scrapy's `LxmlLinkExtractor`. Its `__init__` method takes settings that determine which links may be extracted, and its `extract_links()` method returns a list of matching `Link` objects from a `Response` object. Inside the spider, the `allowed_domains` variable makes sure the spider doesn't go off on a tangent and download pages that are not on the Wikipedia domain.

If you right-click any of the links that point to other lists and copy the link address, you will see that they always follow the same pattern: they contain the string `List_of`. Let's take advantage of this and write the rule set: `Rule(LinkExtractor(allow=('List_of',), deny=('bear',)), callback='parse_item')`. This rule extracts any link that contains `List_of`. Because the `deny` tuple contains the word `bear`, it stays away from anything bear-related. Both values are regular expressions matched against the link URL, so `bear` also rules out `bears`. The `callback` value tells the crawler to invoke `parse_item` for further processing once each matching page has been downloaded. In other words, Scrapy downloads every link on the start page that obeys these rules and then calls `parse_item`. In `parse_item(self, response)`, we just log the URL that has been downloaded with `self.logger.info('Downloaded list wiki - %s', response.url)`. When you run the spider, it should output all the links that point to the lists of animals.

Rules are not the only way to follow links. In a regular spider, the usual approach is a callback method that does three things: it extracts the items on a page, looks for the link to the next page, and yields a request for that link with the same callback. This produces a loop through every page. For example, a `parse_articles_follow_next_page(self, response)` method can iterate over `response.xpath("//article")` and read the next-page link with `next_page = response.css("ul.navigation > li.next-page > a::attr('href')")`. If a link was found, it builds an absolute URL with `url = response.urljoin(next_page[0].extract())` and yields `scrapy.Request(url, self.parse_articles_follow_next_page)`.

If you want to scale a web crawler like this to thousands of downloads, and you run it frequently, you will soon run into rate limits, CAPTCHA challenges, and even IP blocks. To scale reliably to thousands of requests, you can consider a professional rotating proxy service such as Proxies API. It routes requests through millions of anonymous proxies to prevent these blocking problems. Proxies API also handles automatic retries and automatic User-Agent string rotation, and solves CAPTCHAs behind the scenes, all through a simple one-line API call. We have a running offer of 1,000 API calls completely free.

In this section, we'll study how to extract the links of the pages we are interested in, follow them, and extract data from those pages. Here, Scrapy uses a callback mechanism to follow links. For this, we need to make the following changes to our previous code. In `parse()`, loop with `for href in response.css("ul.directory.dir-col > li > a::attr('href')"):`. Turn each `href` into an absolute URL with `url = response.urljoin(href.extract())`, then yield `scrapy.Request(url, callback=self.parse_dir_contents)`. The code uses the following methods:

- `parse()`: extracts the links we are interested in.
- `response.urljoin()`: the `parse()` method uses it to build a new URL and create a new request, which is later sent to the callback.
- `parse_dir_contents()`: a callback that actually scrapes the data of interest.

Using this mechanism, you can design a bigger crawler that follows the links of interest to scrape the desired data from different pages. Note that we have to filter the URLs we receive, so that we extract data only from the book URLs and not from every URL.

Today we have learnt how a crawler works. This was not just another step in your web scraping learning; it was a great leap.
